From Zero to App Store in 47 Days

Discover how a solo engineer transformed an idea into the Favory app—a social wishlist platform—within just 47 days, navigating challenges and tech choices along the way.

Introduction

47 days. Just enough time to finish one (maybe two if you watch it like me) Netflix series. Enough time to go through one work sprint, which mostly gets derailed by meetings. In 47 days, most products don't even have a name.

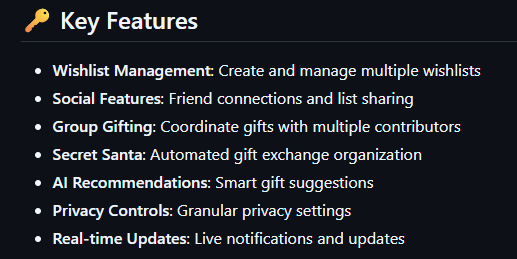

47 days is how long it took to go from a blank repository to a fully (well, mostly fully) deployed social wishlist app—complete with AI gift idea generation, collaborative wishlists, and an automated Secret Santa mode that actually works.

This is the story of taking Favory from idea to shipped app, a project where I served as Head Engineer (read: sole engineer) for most of its early life. No team to delegate to. No six-month ramp-up. Just an idea, a lot of coffee, and a lot of ambition.

If you have ever wondered what it looks like to ship a full-stack (mobile app and website) product under pressure—the tech decisions, the tradeoffs, the things that seemed to break without explanation or apology at 3 AM—this is that story.

Completed app development in 47 days from scratch.

Built Favory to simplify gift-giving with social features.

Used React 18 with TypeScript for frontend development.

Integrated OpenAI API for AI-powered gift recommendations.

Implemented Redux Toolkit for predictable client-side state management.

Utilized PostgreSQL with Prisma ORM for database management.

Employed React Query for efficient server state management.

Focused on user-friendly features to enhance gift selection experience.

The Context

Gift-giving is a liminal void between wanting to get something meaningful and saying "maybe another gift card to Chipotle will suffice."

You try to ask what they want and they respond with "oh nothing." Or, you have to think of something for your mom who already has everything. You start hunting, searching online for "what gifts to give someone who already has three crockpots" or scouring their Amazon Wishlist, which hasn't been updated since 2018. Nothing.

Favory was built to fix this. The idea came from my aunt, who suggested a few years back that I make something like it. The idea: make a website or an app where people can maintain their lists, and make it enjoyable to do so with social features. A place where families and friends can easily see when something is marked as owned, so you don't get your aunt Michelle another crockpot (you can only make so many roasts at once). Somewhere Secret Santa isn't just a spreadsheet that's a hassle to manage, and the one friend who’s the emotional glue of the group can stay sane.

My Role? Head Engineer.

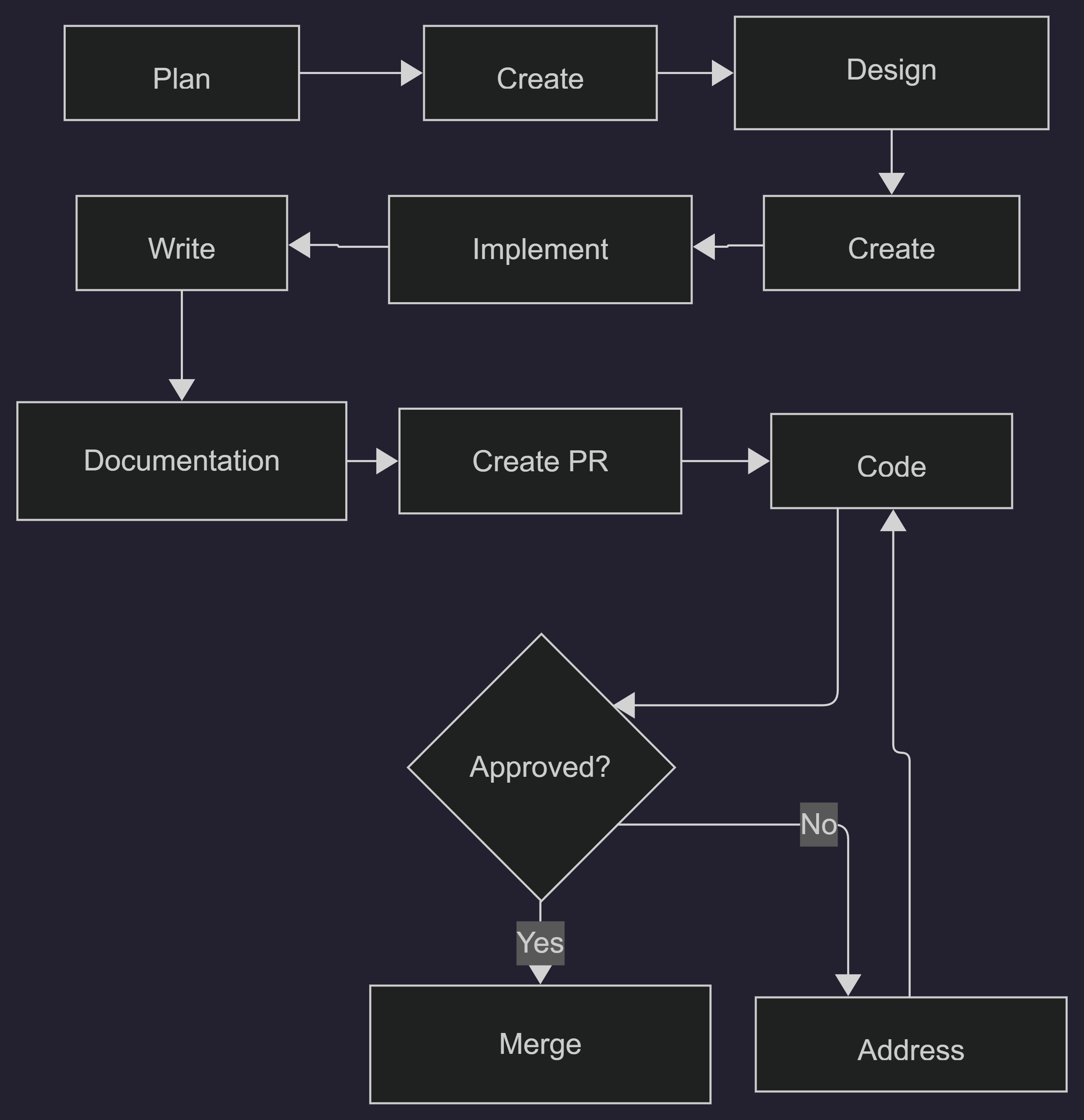

To be clear, for the first 47 days of the project, I did everything.

From design and ideation to crying silently to myself. All the way to frontend, backend, database migrations, API integration, and deployment. I was the one who was silently whispering into the air about what it means to give a gift that means something.

This was a moment where I questioned every decision I made. There wasn't anyone else to discuss what was going on, why a bug persisted even after deleting the entire file, or what stack to use.

This wasn't some sort of side project that could just be discarded. This had real stakes, real cash invested, a real deadline to meet, and the expectation that real users would use this tool consistently. With the holiday season rapidly approaching, shipping on time was vital. It also had real consequences if executed poorly. The platform would be available to minors (because they need gifts too), which added an extra layer of responsibility: putting proper protections in place and complying with GDPR and COPPA.

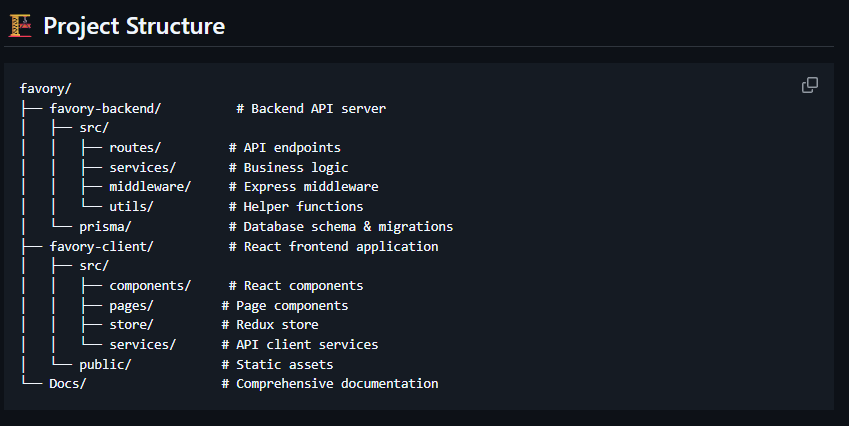

Tech Stack Overview

When you're the only engineer and the clock is looming over you, every technical choice means something. Picking tools that wouldn't box me in but were still familiar enough to get the job done was vital. I couldn't risk losing usability, but I couldn't waste days on endless pages of documentation either. It was a sprint of strategic positioning.

Here is what I went with:

Frontend

Framework: React 18 with TypeScript

State Management: Redux Toolkit

Styling: Styled Components

UI Library: Ant Design

Build Tool: Vite

Testing: Jest & Cypress

Backend

Runtime: Node.js with TypeScript

Framework: Express.js

Database: PostgreSQL with Prisma ORM

Authentication: JWT with refresh tokens

Real-time: Socket.io

Cache: Redis

Storage: AWS S3

Database

PostgreSQL

Using Supabase

AI Integration

OpenAI API

Hosting & Deployment

GitHub

Render

Supabase

AWS

Redis

One decision worth noting is my approach to state management on the frontend.

I considered relying on React Context and local state, or using a lightweight store, because they're quicker to set up and require less boilerplate upfront. For a small app, that can work well.

But ultimately I went with Redux Toolkit for client-side state. In a 47-day build I needed predictable data flow, strong TypeScript expectations, and tooling that would hold up as features stacked up quickly. Redux Toolkit gave me the structure without getting in the way, which helped the app grow to what it needed to be.

For server state, I reached for React Query (TanStack Query). This was a game-changer. Instead of writing endless useState + useEffect patterns for API calls, React Query handles caching, background refetching, and error states out of the box. It meant I could focus on building features instead of chasing stale-data bugs.

```typescript
import { QueryClient } from '@tanstack/react-query';
import { toast } from 'sonner';

export const queryClient = new QueryClient({
  defaultOptions: {
    queries: {
      staleTime: 5 * 60 * 1000, // Data stays fresh for 5 minutes
      gcTime: 10 * 60 * 1000, // Cache persists for 10 minutes
      retry: (failureCount, error: any) => {
        // Axios-style errors carry the HTTP status on error.response
        const status = error?.response?.status;
        if (status === 429) return false; // Don't retry rate limits
        if (status >= 400 && status < 500) return false; // Don't retry other client errors
        return failureCount < 3;
      },
      refetchOnWindowFocus: false, // Reduce unnecessary API calls
      refetchOnReconnect: true,
    },
    mutations: {
      onError: (error: any) => {
        const message = error?.response?.data?.message || 'Something went wrong';
        toast.error(message);
      },
    },
  },
});

// And a query key factory to keep cache invalidation realistic
export const queryKeys = {
  auth: {
    user: () => ['auth', 'user'],
  },
  lists: {
    all: () => ['lists'],
    mine: () => ['lists', 'mine'],
    detail: (id: string) => ['lists', 'detail', id],
  },
  items: {
    byList: (listId: string) => ['items', 'byList', listId],
    comments: (itemId: string) => ['items', 'comments', itemId],
  },
  friends: {
    all: () => ['friends'],
    requests: () => ['friends', 'requests'],
  },
  notifications: {
    all: () => ['notifications'],
    unread: () => ['notifications', 'unread'],
  },
};
```
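Hierarchical keys like these pay off at invalidation time, because TanStack Query matches query keys by prefix when you invalidate. The sketch below imitates that behavior with a hypothetical `matchesKeyPrefix` helper. It is a simplified stand-in, not the library's code (the real matcher also compares object elements deeply), and the sample cache contents are made up for illustration.

```typescript
type QueryKey = readonly unknown[];

// Hypothetical stand-in for the library's partial key matching:
// a cached key matches when it starts with every element of the filter key.
function matchesKeyPrefix(cachedKey: QueryKey, filterKey: QueryKey): boolean {
  if (filterKey.length > cachedKey.length) return false;
  return filterKey.every((part, i) => part === cachedKey[i]);
}

// Made-up cache contents for the example
const cachedKeys: QueryKey[] = [
  ['lists'],
  ['lists', 'mine'],
  ['lists', 'detail', 'abc'],
  ['items', 'byList', 'abc'],
];

// Which cached entries would an invalidation with this key touch?
function keysInvalidatedBy(filterKey: QueryKey): QueryKey[] {
  return cachedKeys.filter((key) => matchesKeyPrefix(key, filterKey));
}
```

In the app itself this would likely be a single `queryClient.invalidateQueries({ queryKey: queryKeys.lists.all() })` call after a mutation, which refreshes every list query at once.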

```tsx
// And a component that needs lists just calls useMyLists() and gets loading states, error handling and caching for free
function MyComponent() {
  const { data: lists, isLoading, error } = useMyLists();

  if (isLoading) return <Loader />;
  if (error) return <Error />;

  return <ListGrid lists={lists} />;
}
```

Under the Hood

Every app has a feature list. This section shows which features made it into the repo, the challenges of building them, and what actually worked.

Feature 1: AI-Powered Gift Recommendations

The idea was simple: tell Listo (Favory's AI bot powered by OpenAI) a little bit about someone from their public lists and have it suggest something that they might actually like, either from the list itself or a generated idea.

The reality is messier.

The Challenge

AI recommendations are only as good as the context you give them. Too little information and they end up giving the user generic suggestions (hint hint the crockpot from earlier). But too much information and you're asking the user to write a 700-word soliloquy about what someone could want, which they will give up on halfway through.

I needed a system that could give genuine recommendations from as little user input as possible: a few ideas, maybe some items the person has requested in the past, and a price range.

The Approach

The gift recommender uses a multi-layered approach:

Privacy-respecting signal aggregation from public data

Heuristic candidate generation based on interests/categories

LLM re-ranking for personalization

Real-time product search for purchase links

The key insight was that the system prioritizes items already on the person's lists (the highest-confidence signal), then looks at their publicly visible interests. It only reads data the requester is already allowed to see: public lists, plus friends-only lists when the two users are friends. Private lists are walled off entirely. This keeps recommendations privacy-first, which also makes them more trustworthy.
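As a rough sketch of that prioritization, the heuristic step could drop anything outside the budget and then order candidates so items from the person's own lists come first. The `sourceType`, `priceMin`, and `priceMax` fields mirror the ones used in this post's prompt template; the `Candidate` type, the `'generated'` source value, and `orderCandidates` are assumptions for illustration, not Favory's actual code.

```typescript
interface Candidate {
  title: string;
  priceMin: number;
  priceMax: number;
  sourceType: 'actual_list_item' | 'generated'; // 'generated' is a placeholder name
}

// Items the person actually listed are the highest-confidence signal
function rank(candidate: Candidate): number {
  return candidate.sourceType === 'actual_list_item' ? 0 : 1;
}

function orderCandidates(
  candidates: Candidate[],
  budgetMin: number,
  budgetMax: number
): Candidate[] {
  return candidates
    // Keep candidates whose price range overlaps the budget
    .filter((c) => c.priceMin <= budgetMax && c.priceMax >= budgetMin)
    // Array.prototype.sort is stable, so ties keep their original order
    .sort((a, b) => rank(a) - rank(b));
}
```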

```typescript
private async aggregateSignals(requesterId: string, targetUserId: string) {
  const signals = {
    publicLists: [],
    likedItems: [],
    actualListItems: [],
    tags: new Set<string>(),
    categories: new Set<string>(),
    interests: [] as string[]
  };

  // Note: requesterUser and targetEmail are not defined in this excerpt;
  // presumably they are looked up from requesterId and targetUserId.

  // Check friendship status to determine visibility level
  const friendship = await prisma.friendship.findFirst({
    where: {
      OR: [
        { user1Email: requesterUser.email, user2Email: targetEmail },
        { user1Email: targetEmail, user2Email: requesterUser.email }
      ],
      status: 'active'
    }
  });

  const isFriend = !!friendship;

  // Only access lists based on visibility rules
  const visibleLists = await prisma.favoriteList.findMany({
    where: {
      createdBy: targetEmail,
      visibility: {
        in: isFriend ? ['public', 'friends'] : ['public'] // Key privacy gate
      },
      isArchived: false
    },
    // ... extract items, categories, tags
  });

  return signals;
}
```

```typescript
// tags and categories are Sets, so spread them into arrays before join/slice
const prompt = `You are a thoughtful gift recommendation assistant. Based on the
following information about a friend (respecting their privacy), rank and enhance
these gift candidates.

Context:
- Occasion: ${occasion || 'General gift-giving'}
- Budget: $${budgetMin}-$${budgetMax}
- Friend's interests from PUBLIC data: ${signals.interests.join(', ')}
- Categories they like: ${[...signals.categories].join(', ')}
- Tags from their public lists: ${[...signals.tags].slice(0, 10).join(', ')}
${context.theme ? `- THEME REQUIREMENT: ${context.theme}` : ''}

Gift Candidates to rank:
${candidates.map((c, i) => {
  const source = c.sourceType === 'actual_list_item'
    ? ` [FROM THEIR LIST: ${c.listName}]` : '';
  return `${i + 1}. ${c.title} ($${c.priceMin}-$${c.priceMax})${source}`;
}).join('\n')}

Important:
- Include a MIX of both items from their lists AND AI-generated suggestions
- Items marked [FROM THEIR LIST] should be marked with "isFromList": true
- Only reference information from PUBLIC lists and profiles.`;
```

The trick wasn't teaching the AI itself (OpenAI had already done that); it was teaching the AI what NOT to know. Limiting the data made gift recommendations helpful instead of invasive and creepy. Nobody wants to open a gift and think "how the hell did they know about this?"
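The "what NOT to know" rule can be isolated as a small pure function, which also makes it easy to unit-test. This is a sketch, not Favory's code: the `ListSummary` type and both function names are assumptions, and `'private'` is a placeholder for any list that is not shared. Only the `'public'` and `'friends'` values and the `isArchived` flag come from the query shown earlier.

```typescript
type Visibility = 'public' | 'friends' | 'private';

interface ListSummary {
  name: string;
  visibility: Visibility;
  isArchived: boolean;
}

// The privacy gate: friends see public and friends-only lists, everyone else sees public only
function allowedVisibilities(isFriend: boolean): Visibility[] {
  return isFriend ? ['public', 'friends'] : ['public'];
}

// Filter a user's lists down to what the requester may use as recommendation signals
function listsVisibleTo(lists: ListSummary[], isFriend: boolean): ListSummary[] {
  const allowed = allowedVisibilities(isFriend);
  return lists.filter((list) => !list.isArchived && allowed.includes(list.visibility));
}
```

Keeping the gate in one place means a test can assert that a private list never reaches the prompt, whatever the friendship status.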

Feature 2: Universal Wishlist Import

It is a headache to switch to "just another app." Nobody wants to start from scratch again. And nobody wants to waste time setting up their profile if the app ends up being something that just sits on their phone until it takes up too much space so they delete it. If someone already has an Amazon list, or Pinterest board, or general list saved on their phone hidden in their notes app, I designed Favory to just pull them in and set up the list for them.

The Challenge

Every system structures its data differently. Some have clean APIs with documentation to help you bring in the data. Most don't (I am looking at you [insert retailer that you think I am talking about]). Favory is dealing with inconsistent URLs, jumbled JSON files, products that don't exist, and rate limiting.

The Approach

Three-tier extraction system:

Browser extension that reads product page metadata (Open Graph, JSON-LD)

Backend integration with official product data APIs (via RapidAPI)

AI-powered fallback for sites without structured data

Edge cases were the real problem here. Amazon using shortened URLs at random (specifically from the app) was the first curveball. There are at least four URL patterns for sites like that. They also redirect all over the place and end up somewhere in the void that Favory couldn't parse easily.

The fun secret of a 'universal' import is that nothing about it is ever universal. Every site is a unique snowflake in an avalanche of imports. The solution wasn't some complex algorithm. It was accepting that I needed site-specific rules to get the data I needed, with AI as a fallback when those rules came up empty.
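One way to picture site-specific rules with a fallback is a small router that inspects the hostname and picks an extraction path. The sketch below is illustrative only: `pickExtractor`, `isHostOrSubdomain`, and the `'ai-fallback'` label are hypothetical names, and only the `/dp/` and `/gp/product/` URL shapes are covered. Matching on the exact host or a subdomain, rather than a substring, keeps a lookalike domain such as `amazon.com.example.org` from being routed to the retailer path.

```typescript
type ExtractorName = 'amazon' | 'ai-fallback';

// True for "amazon.com" and "www.amazon.com", false for "amazon.com.example.org"
function isHostOrSubdomain(hostname: string, base: string): boolean {
  return hostname === base || hostname.endsWith('.' + base);
}

// Choose a site-specific path when one exists, otherwise fall back to AI extraction
function pickExtractor(rawUrl: string): ExtractorName {
  const hostname = new URL(rawUrl).hostname.toLowerCase();
  if (isHostOrSubdomain(hostname, 'amazon.com')) return 'amazon';
  return 'ai-fallback';
}

// Pull the 10-character product identifier out of the two URL shapes handled here
function extractAsin(rawUrl: string): string | null {
  const patterns = [/\/dp\/([A-Z0-9]{10})/i, /\/gp\/product\/([A-Z0-9]{10})/i];
  for (const pattern of patterns) {
    const match = rawUrl.match(pattern);
    if (match) return match[1];
  }
  return null;
}
```

Shortened links would still need to be resolved to their final URL before either function sees them.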

```typescript
// Browser extension - extracting data sites WANT to share (for SEO/social)
function extractStructuredData() {
  const data = {
    name: '',
    description: '',
    price: '',
    image_url: '',
    url: window.location.href
  };

  // 1. Try JSON-LD (structured data for search engines)
  const jsonLdScript = document.querySelector('script[type="application/ld+json"]');
  if (jsonLdScript) {
    try {
      const jsonLd = JSON.parse(jsonLdScript.textContent);

      // Handle both single object and array formats
      const product = Array.isArray(jsonLd)
        ? jsonLd.find(item => item['@type'] === 'Product')
        : (jsonLd['@type'] === 'Product' ? jsonLd : null);

      if (product) {
        data.name = product.name || '';
        data.description = product.description || '';
        // image may be a single URL or an array of URLs; indexing a string
        // with [0] would return only its first character
        data.image_url = (Array.isArray(product.image) ? product.image[0] : product.image) || '';

        // Price can be nested in offers
        if (product.offers) {
          const offer = Array.isArray(product.offers)
            ? product.offers[0]
            : product.offers;
          data.price = offer.price ? `$${offer.price}` : '';
        }
      }
    } catch (e) {
      // JSON-LD parsing failed, continue to fallbacks
    }
  }

  // 2. Try Open Graph tags (data sites publish for social sharing)
  if (!data.name) {
    data.name = document.querySelector('meta[property="og:title"]')
      ?.getAttribute('content') || '';
  }
  if (!data.description) {
    data.description = document.querySelector('meta[property="og:description"]')
      ?.getAttribute('content') || '';
  }
  if (!data.image_url) {
    data.image_url = document.querySelector('meta[property="og:image"]')
      ?.getAttribute('content') || '';
  }

  // 3. Standard meta tags as last resort
  if (!data.name) {
    data.name = document.querySelector('title')?.textContent?.trim() || '';
  }
  if (!data.description) {
    data.description = document.querySelector('meta[name="description"]')
      ?.getAttribute('content') || '';
  }

  return data;
}
```

Favory's browser extension reads product metadata that sites publish for search engines and social media previews (Open Graph tags, JSON-LD structured data). For major retailers, we use licensed third-party APIs for accurate product information. The extension operates client-side, helping users save items to their personal wishlists, similar to browser bookmarks but with richer product details.
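JSON-LD in the wild is messier than a single `Product` object: pages may wrap entities in an `@graph` array, give `@type` as a list, or describe `image` as a string, an array, or an object with a `url`. A more tolerant lookup might look like the sketch below. `findProduct` and `firstImage` are hypothetical helpers that work on already-parsed JSON, so they can be tested without a browser.

```typescript
type JsonLdNode = Record<string, unknown>;

// A node counts as a product when @type is 'Product' or a list containing it
function isProduct(node: unknown): node is JsonLdNode {
  if (typeof node !== 'object' || node === null) return false;
  const type = (node as JsonLdNode)['@type'];
  return Array.isArray(type) ? type.includes('Product') : type === 'Product';
}

// Search arrays and @graph containers for the first product node
function findProduct(jsonLd: unknown): JsonLdNode | null {
  if (Array.isArray(jsonLd)) {
    for (const entry of jsonLd) {
      const found = findProduct(entry);
      if (found) return found;
    }
    return null;
  }
  if (isProduct(jsonLd)) return jsonLd;
  if (typeof jsonLd === 'object' && jsonLd !== null) {
    const graph = (jsonLd as JsonLdNode)['@graph'];
    if (Array.isArray(graph)) return findProduct(graph);
  }
  return null;
}

// Normalize the image field to a single URL string ('' when nothing usable)
function firstImage(image: unknown): string {
  if (typeof image === 'string') return image;
  if (Array.isArray(image)) return firstImage(image[0]);
  if (typeof image === 'object' && image !== null) {
    const url = (image as JsonLdNode).url;
    return typeof url === 'string' ? url : '';
  }
  return '';
}
```

A page can also carry several JSON-LD script tags, so a fuller version would parse each one and pass the results through `findProduct` as an array.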

```typescript
// Backend - using legitimate product data APIs for enrichment
// (methods of the extraction service; class wrapper and imports omitted)
async extractProductData(url: string): Promise<ProductData> {
  const domain = new URL(url).hostname;

  // For major retailers, use official/licensed data APIs
  if (domain.includes('amazon.com')) {
    return await this.fetchFromAmazonAPI(url);
  }

  // Fall back to AI extraction for other sites
  return await this.extractWithAI(url);
}

async fetchFromAmazonAPI(url: string) {
  // Extract product identifier from URL
  const asinPatterns = [
    /\/dp\/([A-Z0-9]{10})/i,
    /\/gp\/product\/([A-Z0-9]{10})/i,
  ];

  let asin = null;
  for (const pattern of asinPatterns) {
    const match = url.match(pattern);
    if (match) { asin = match[1]; break; }
  }

  if (!asin) {
    throw new Error('Could not identify product');
  }

  // Use RapidAPI's licensed Amazon data service
  const response = await axios.get(
    'https://real-time-amazon-data.p.rapidapi.com/product-details',
    {
      params: { asin, country: 'US' },
      headers: {
        'X-RapidAPI-Key': process.env.RAPIDAPI_KEY,
        'X-RapidAPI-Host': 'real-time-amazon-data.p.rapidapi.com'
      }
    }
  );

  return {
    name: response.data.data.product_title,
    price: response.data.data.product_price,
    image_url: response.data.data.product_photo,
    description: response.data.data.about_product?.slice(0, 3).join(' | ')
  };
}
```

```typescript
// When structured data isn't available, use AI to interpret the page
async extractWithAI(url: string) {
  // Fetch page content
  const response = await axios.get(url, {
    headers: { 'User-Agent': 'Mozilla/5.0...' },
    timeout: 10000
  });

  const $ = cheerio.load(response.data);

  // Gather all available metadata
  const pageData = {
    title: $('title').text(),
    metaDescription: $('meta[name="description"]').attr('content'),
    ogTitle: $('meta[property="og:title"]').attr('content'),
    ogDescription: $('meta[property="og:description"]').attr('content'),
    ogImage: $('meta[property="og:image"]').attr('content'),
    // Get visible text (truncated to avoid token limits)
    bodyText: $('body').text().replace(/\s+/g, ' ').trim().substring(0, 2000)
  };

  // Ask AI to extract structured product info
  const completion = await openai.chat.completions.create({
    model: 'gpt-3.5-turbo',
    messages: [{
      role: 'system',
      content: 'Extract product information from webpage metadata. Return JSON.'
    }, {
      role: 'user',
      content: `URL: ${url}
Title: ${pageData.title}
OG Title: ${pageData.ogTitle}
Description: ${pageData.metaDescription || pageData.ogDescription}
OG Image: ${pageData.ogImage}
Page excerpt: ${pageData.bodyText}

Return: { "name": "...", "description": "...", "price": "...", "image_url": "..." }`
    }],
    temperature: 0.2 // Low temperature for consistent extraction
  });

  return JSON.parse(completion.choices[0]?.message?.content || '{}');
}
```

Feature 3: Secret Santa Automation

We all have that friend (they are the best kind of friend) who puts together the group hangouts, and at Christmas time organizes the Secret Santa using spreadsheets, Word docs, secret group chats, and the last two brain cells they have left after dealing with the chaos of their own Christmas.

The idea was simple: offload their work onto an algorithm that saves their time, sanity, and energy. On paper it was simple; in code it was another story.

The Approach

Constraint-based matching using Fisher-Yates shuffle with validation:

Collect constraints (self-match prevention, couple exclusions)

Shuffle and attempt assignment

Validate against all constraints

Retry up to maxRetries if validation fails

Each assignment also records a backup giftee when one is available, so if someone drops out after assignments are made, the whole draw doesn't have to be regenerated.
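The `shuffleArray` helper isn't shown in this post. For reference, a textbook Fisher-Yates shuffle looks like the sketch below. The injectable `random` parameter is an assumption added here so the function can be tested deterministically; the real helper may differ.

```typescript
// Fisher-Yates: walk backwards, swapping each element with a random earlier one (or itself).
// Returns a new array and leaves the input untouched.
function shuffleArray<T>(items: readonly T[], random: () => number = Math.random): T[] {
  const result = [...items];
  for (let i = result.length - 1; i > 0; i--) {
    const j = Math.floor(random() * (i + 1)); // 0 <= j <= i
    [result[i], result[j]] = [result[j], result[i]];
  }
  return result;
}
```

With a uniform random source every ordering is equally likely, which is what keeps the draw fair before any constraints are applied.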

```typescript
export interface SecretSantaConstraints {
  noSelfMatch: boolean; // Nobody draws themselves
  coupleExclusions: string[][]; // Array of email pairs that can't match
  evenPriceDistribution: boolean; // Spread budget ranges evenly
  maxRetries: number; // How many times to try before giving up
}

// Get valid giftees for a specific gifter
private static getValidGiftees(
  gifter: GroupMemberData,
  availableGiftees: GroupMemberData[],
  constraints: SecretSantaConstraints
): GroupMemberData[] {
  return availableGiftees.filter(giftee => {
    // Rule 1: No self-match
    if (constraints.noSelfMatch && gifter.userEmail === giftee.userEmail) {
      return false;
    }

    // Rule 2: Couple exclusions (spouses, roommates, etc.)
    if (constraints.coupleExclusions.length > 0) {
      const isExcluded = constraints.coupleExclusions.some(couple =>
        (couple[0] === gifter.userEmail && couple[1] === giftee.userEmail) ||
        (couple[1] === gifter.userEmail && couple[0] === giftee.userEmail)
      );
      if (isExcluded) return false;
    }

    return true;
  });
}
```

```typescript
// Fisher-Yates shuffle with constraint checking
private static attemptAssignmentGeneration(
  members: GroupMemberData[],
  constraints: SecretSantaConstraints,
  priceRanges: PriceRange[]
): SecretSantaAssignment[] {
  const assignments: SecretSantaAssignment[] = [];
  const availableGiftees = [...members];
  const shuffledGifters = this.shuffleArray([...members]);

  for (let i = 0; i < shuffledGifters.length; i++) {
    const gifter = shuffledGifters[i];
    const validGiftees = this.getValidGiftees(gifter, availableGiftees, constraints);

    if (validGiftees.length === 0) {
      // Throwing here triggers a retry with a fresh shuffle
      throw new Error(`No valid giftees for ${gifter.userEmail}`);
    }

    // Random selection from valid options
    const randomIndex = Math.floor(Math.random() * validGiftees.length);
    const selectedGiftee = validGiftees[randomIndex];

    // Generate backup in case someone drops out
    const backupGiftees = validGiftees.filter(g => g.id !== selectedGiftee.id);
    const backupGiftee = backupGiftees.length > 0 ? backupGiftees[0] : undefined;

    assignments.push({
      gifterEmail: gifter.userEmail,
      gifteeEmail: selectedGiftee.userEmail,
      priceRange: constraints.evenPriceDistribution ? priceRanges[i] : undefined,
      backupGifteeEmail: backupGiftee?.userEmail
    });

    // Remove selected from pool
    const gifteeIndex = availableGiftees.findIndex(g => g.id === selectedGiftee.id);
    availableGiftees.splice(gifteeIndex, 1);
  }

  return assignments;
}
```

```typescript
private static async generateValidAssignments(
  members: GroupMemberData[],
  constraints: SecretSantaConstraints,
  priceRanges: PriceRange[] = [] // passed through so the call below matches the three-argument signature
): Promise<SecretSantaResult> {
  let retryCount = 0;

  while (retryCount < constraints.maxRetries) {
    try {
      const assignments = this.attemptAssignmentGeneration(members, constraints, priceRanges);

      // Validate ALL constraints are satisfied
      if (this.validateAssignments(assignments, constraints)) {
        return { success: true, assignments, retryCount };
      }
    } catch (error) {
      // Assignment failed (probably ran out of valid giftees)
      // Shuffle and try again
    }
    retryCount++;
  }

  return {
    success: false,
    error: `Failed after ${constraints.maxRetries} attempts`,
    assignments: []
  };
}
```

API Endpoints - Privacy Protection: secret-santa.ts (routes)

```typescript
// Only showing users their own assignment
router.get('/groups/:groupId/my-assignment', authenticateToken, async (req, res) => {
  const { groupId } = req.params;

  // User only sees WHO they're buying for, never who's buying for them
  const member = await prisma.groupMember.findFirst({
    where: {
      groupId,
      userEmail: req.user.email // Only YOUR assignment
    },
    include: {
      assignedGiftee: {
        include: {
          user: { include: { publicProfile: true } }
        }
      }
    }
  });

  // Guard against a missing membership or a draw that hasn't happened yet
  if (!member?.assignedGiftee) {
    return res.status(404).json({ error: 'No assignment found' });
  }

  // memberAnswers (the giftee's questionnaire answers) is loaded here;
  // that lookup is omitted from this excerpt

  // Return giftee info + their questionnaire answers
  res.json({
    assignment: {
      giftee: {
        name: member.assignedGiftee.user.publicProfile?.fullName,
        profileImageUrl: member.assignedGiftee.user.publicProfile?.profileImageUrl
      },
      answers: memberAnswers?.answers || {} // "What's your favorite color?" etc.
    }
  });
});

// Only the group OWNER can see all assignments (for troubleshooting)
router.get('/groups/:groupId/assignments', authenticateToken, async (req, res) => {
  // group is loaded by groupId before this check (lookup omitted from this excerpt)
  if (group.ownerEmail !== req.user.email) {
    return res.status(403).json({ error: 'Only group owner can view all assignments' });
  }
  // ...
});
```

Debugging Secret Santa at 1 AM hits different when you realize your algorithm just assigned someone to themselves three times in a row.

(Frustrated) Tyler Kurt Gordon

Secret Santa is a graph matching problem dressed as a Christmas tree. Each person is a node, every valid assignment is an edge, and you need a perfect matching. The constraints themselves remove edges, and if you remove too many, no valid matching exists. (The best part is writing an error message for when someone creates a mathematically impossible matching group.)

Error [Math is Broken]: Please stop making my algorithm chase its tail like a sad dog.
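Random retries can't tell an unlucky shuffle from a group that is genuinely impossible. Because this is a bipartite matching problem, an augmenting-path search (Kuhn's algorithm) can answer that question definitively before any retries are spent. The sketch below is an illustration under stated assumptions, not the Favory implementation: members are plain email strings, `canGive` and `findAssignment` are hypothetical names, and the result is deterministic, so a real draw would still shuffle `members` first.

```typescript
type Pair = [string, string];

// An edge exists when the pair is not a self-match and not an excluded couple
function canGive(gifter: string, giftee: string, exclusions: Pair[]): boolean {
  if (gifter === giftee) return false;
  return !exclusions.some(
    ([a, b]) => (a === gifter && b === giftee) || (a === giftee && b === gifter)
  );
}

// Kuhn's augmenting-path algorithm. Returns gifter -> giftee,
// or null when no perfect matching exists for this group.
function findAssignment(members: string[], exclusions: Pair[]): Map<string, string> | null {
  const gifterOf = new Map<string, string>(); // giftee -> gifter

  const tryAssign = (gifter: string, visited: Set<string>): boolean => {
    for (const giftee of members) {
      if (visited.has(giftee) || !canGive(gifter, giftee, exclusions)) continue;
      visited.add(giftee);
      const current = gifterOf.get(giftee);
      // Take a free giftee, or bump the current gifter if they can move elsewhere
      if (current === undefined || tryAssign(current, visited)) {
        gifterOf.set(giftee, gifter);
        return true;
      }
    }
    return false;
  };

  for (const gifter of members) {
    if (!tryAssign(gifter, new Set<string>())) return null; // mathematically impossible group
  }

  const assignment = new Map<string, string>();
  gifterOf.forEach((gifter, giftee) => assignment.set(gifter, giftee));
  return assignment;
}
```

A `null` result is the cue for the "Math is Broken" message: the organizer has to relax an exclusion or add a member, because no number of reshuffles will help.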

Bonus Feature: Because I think it is cool

The Secret Santa feature lets the organizer set specific questions for each member to answer, so everyone can see what Bob's favorite candy bar is or what Sally's shoe size is. It makes the exchange personal, gives the gift giver ideas, and lets the organizer hint at a price range without explicitly saying so.

Secret Santa is actually a sub-feature of the groups feature. A group can be made with custom questions (think teams), and then the organizer can turn on Secret Santa mode if desired.

The Honest Part

Okay, okay, okay... I know... You are thinking "wow, Tyler is clever but lying by omission. 47 days of solo development is possible but wild." Which is all true. 47 days of development means 47 days of muttering to yourself while it feels like everything is falling apart (which let's be honest... a lot did.)

Here is what actually went wrong and what I would do differently.

The Time I Wrote 15+ Different Rate Limiters

Here is what happened: I had a simple goal of limiting the number of requests to the backend API for each user. Save some money and ensure that the backend wasn't getting locked up every time a single user decided to add "Monkey Socks" to their list.

Users started to complain about being locked out of even being able to add their "Monkey Socks", getting error messages blaming them for trying to bombard the server with requests.

I had to fix this! And fix this fast. Those Monkey Socks weren't going to add themselves. I had to add per-endpoint limiters with different thresholds. This way a user could add a lot of items to their lists, use AI appropriately (so my OpenAI account wouldn't deplete faster than the Great Salt Lake), and notifications wouldn't get sent every millisecond. With every line of code I was getting tired. Oh a rate limiter for data export? Oh another one for searching? Oh don't forget the one for support tickets... This was becoming unmanageable.

The following code explains the solution, before and after.

```typescript
// From rateLimiting.ts - 15 different limiters for different scenarios
export const apiLimiter = rateLimit({...}); // General API
export const authLimiter = rateLimit({...}); // Authentication (strictest)
export const uploadLimiter = rateLimit({...}); // File uploads
export const searchLimiter = rateLimit({...}); // Search (most lenient)
export const aiLimiter = rateLimit({...}); // AI endpoints (cost control)
export const notificationLimiter = rateLimit({...});
export const adminLimiter = rateLimit({...});
export const privacyLimiter = rateLimit({...});
export const dataExportLimiter = rateLimit({...}); // Only 2 per day!
export const accountDeletionLimiter = rateLimit({...}); // Only 1 per hour!
export const supportLimiter = rateLimit({...});
export const activityFeedLimiter = rateLimit({...});
export const activityAggregationLimiter = rateLimit({...});
export const aiDuplicateDetectionLimiter = rateLimit({...});
export const listoChatLimiter = rateLimit({...});

// The FIX comments tell the story
const defaultValidateConfig = {
  trustProxy: false, // Disable trust proxy validation for cloud environments
  xForwardedForHeader: false, // Disable X-Forwarded-For validation
};

// NaN checks added after production bugs (excerpt from a limiter's options)
windowMs: Number.isNaN(parseInt(process.env.RATE_LIMIT_WINDOW_MS || '900000'))
  ? 900000
  : parseInt(process.env.RATE_LIMIT_WINDOW_MS || '900000'), // FIX: added NaN check
```

Rate limiting is a UX problem AND a security problem. What might feel "safe" from a security perspective becomes limiting, punishing, and frustrating from a user's perspective. Finding the balance came from actual user feedback, not just my anxious mind trying to guess numbers.

Scope Creep (Even when you are the ONLY stakeholder)

When you are building something, every idea feels like a lead to chase down. The problem is that one idea spawns twenty new bugs and five more ideas like a hydra that keeps multiplying. No one is there to tell you "okay, maybe we don't need coffee delivery service added to support tickets."

I caught myself chasing down worthy leads, specifically when it came to GDPR and CCPA/COPPA compliance. This is not something I took lightly. People on the internet... are... well, the worst. I am not trying to alienate my users, but honestly, do you need to post an adult item on your wishlist titled "20F Looking"?

Minors on the app are a risk, not just from a legal perspective but from a personal perspective. My intention was to NEVER have a minor hurt by my code, other users, or automation. So I scheduled cron jobs for daily, weekly, and even hourly monitoring. It was a worthy effort (and needed to some extent), but I chased it down a rabbit hole that went deeper than a simple wishlist app required; I could have stopped much sooner without compromising baseline protections.

The following code is an example of how far I took this, exploring edge cases with if statements stacked like motivational speakers saying, "I couldn't hear you" after asking you to say, "good morning."

```typescript
// From contentModeration.ts - Look how nuanced this had to become

// Context-dependent topics that need nuanced handling
const CONTEXT_SENSITIVE_TOPICS = [
  // Alcohol in appropriate contexts
  { topic: 'wine', allowedContexts: ['picnic', 'dinner', 'party', 'celebration',
    'date', 'wedding', 'anniversary', 'tasting', 'pairing', 'gift', 'holiday',
    'thanksgiving', 'christmas'] },
  { topic: 'champagne', allowedContexts: ['celebration', 'wedding', 'anniversary',
    'new year', 'graduation', 'promotion', 'birthday', 'brunch', 'toast'] },
  // ...
];

// Excerpt from inside the word-level check (surrounding function omitted)

// Special handling for common false positives
// "Cocktail" in food contexts (shrimp cocktail, fruit cocktail)
if (wordLower === 'cocktail' && (context.includes('shrimp') ||
    context.includes('fruit'))) {
  return true; // Don't flag "shrimp cocktail" as alcohol
}

// "Spirits" in non-alcohol contexts (team spirits, holiday spirits)
if (wordLower === 'spirits' && (context.includes('team') ||
    context.includes('holiday') || context.includes('good'))) {
  return true; // Don't flag "holiday spirits" as alcohol
}
```

I was writing a wishlist app that IS innocent. Then people started to pop up on the platform posting lists that shouldn't even exist in a human mind. By week three I was writing a content moderation system that could distinguish 'shrimp cocktail' from 'whiskey cocktail.' Is that essential for a wishlist app? Probably not. Did I spend four days on it? Yep.
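A data-driven check is one way to keep this kind of rule from sprawling into chains of if statements. The sketch below reuses the `topic` / `allowedContexts` shape from the snippet above with a shortened rule list; `isAllowedInContext` is a hypothetical name, and plain substring matching is a deliberate simplification.

```typescript
interface ContextRule {
  topic: string;
  allowedContexts: string[];
}

// Shortened rule list for the example; the real list is much longer
const RULES: ContextRule[] = [
  { topic: 'wine', allowedContexts: ['dinner', 'wedding', 'gift'] },
  { topic: 'champagne', allowedContexts: ['celebration', 'toast'] },
];

// Returns true when the word is fine as used; false means "send it for review"
function isAllowedInContext(word: string, text: string, rules: ContextRule[] = RULES): boolean {
  const rule = rules.find((r) => r.topic === word.toLowerCase());
  if (!rule) return true; // Not a context-sensitive topic at all
  const lowered = text.toLowerCase();
  return rule.allowedContexts.some((ctx) => lowered.includes(ctx));
}
```

Adding a new edge case then means adding a row of data rather than another branch.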

The lesson wasn't in the code, or in deciding what cocktail meant on a philosophical level. It was learning to recognize when I was solving an imaginary problem instead of shipping.

3AM Bugs That Kept Keurig in Business Single-Handedly

Some bugs only decide to show up after you have pushed to GitHub, tested in a dev environment, and pushed the update to the App Store and Play Store. Your heart drops, your mind starts to spin, and your stomach tightens. You think "how... where did I go wrong?"

I have a few code examples to show those bugs. Take a look at them: some are funny, some are tragic realities, and some haunt me to this day.

```typescript
// From prisma.ts - Connection handling that was clearly added after failures
process.on('uncaughtException', (error) => {
  console.error('Uncaught Exception:', error);

  // Don't exit on database connection errors
  if (error.message?.includes('Connection reset') ||
      error.message?.includes('ECONNRESET')) {
    console.error('Database connection error detected, attempting recovery...');
    return; // Don't crash, try to recover
  }

  handleShutdown('uncaughtException');
});

// Retry mechanism added after production failures
export async function withRetry<T>(
  operation: () => Promise<T>,
  maxRetries: number = 3,
  initialDelay: number = 1000
): Promise<T> {
  for (let attempt = 0; attempt < maxRetries; attempt++) {
    try {
      return await operation();
    } catch (error: any) {
      // Check if error is retryable
      const isRetryable =
        error.code === 'P2024' || // Connection pool timeout
        error.code === 'P1001' || // Can't reach database
        error.message?.includes('Connection reset');

      // Rethrow non-retryable errors, and give up once the last attempt has failed
      if (!isRetryable || attempt === maxRetries - 1) throw error;

      const delay = initialDelay * Math.pow(2, attempt); // Exponential backoff
      console.warn(`Database operation failed (attempt ${attempt + 1}/${maxRetries})`);
      await new Promise(resolve => setTimeout(resolve, delay));
    }
  }

  // Only reachable when maxRetries is 0 or negative
  throw new Error('withRetry requires maxRetries >= 1');
}
```

```typescript
// Multiple files have this pattern, added after production bugs

// BEFORE (buggy):
windowMs: parseInt(process.env.RATE_LIMIT_WINDOW_MS || '900000')

// AFTER (fixed):
windowMs: Number.isNaN(parseInt(process.env.RATE_LIMIT_WINDOW_MS || '900000'))
  ? 900000
  : parseInt(process.env.RATE_LIMIT_WINDOW_MS || '900000')

// Why? Because parseInt returns NaN for an empty or non-numeric string, and the
// `||` default only covers a missing or empty variable, not a malformed one
// And NaN milliseconds breaks everything silently
```

The above bug hurt personally. parseInt('') returns NaN. Not zero. Not an error. NaN. And when you tell your rate limiter that the timeout should be "NaN milliseconds" it side-eyes you and throws an error that will destroy your confidence in the CS degree you have.

I found this one specifically at 2 AM, after seeing that a user had successfully made 6,789 requests in an hour.
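One way to keep this class of bug from coming back is to route every numeric setting through a single helper. The block below is a minimal sketch, not the code Favory shipped: `parseIntOr` is a hypothetical name, and the choice to reject values like `'15m'` or `'0'` is an assumption about what a rate-limit window should accept.

```typescript
// Hypothetical helper (not from the Favory codebase): parse a positive
// integer setting and fall back to a default when the value is missing,
// empty, non-numeric, or not a positive safe integer.
function parseIntOr(raw: string | undefined, fallback: number): number {
  const trimmed = raw?.trim() ?? '';

  // Only plain digit strings pass. This rejects '', 'abc', '-5', and also
  // '15m', which parseInt alone would quietly read as 15.
  if (!/^\d+$/.test(trimmed)) {
    return fallback;
  }

  const parsed = Number.parseInt(trimmed, 10);
  return Number.isSafeInteger(parsed) && parsed > 0 ? parsed : fallback;
}

// Usage: in a Node app the first argument would be an environment variable
// such as process.env.RATE_LIMIT_WINDOW_MS. A literal is used here so the
// snippet runs anywhere.
const exampleWindowMs = parseIntOr('not-a-number', 900000); // falls back to 900000
console.log(exampleWindowMs);
```

Keeping the fallback next to the parsing means the default is written once instead of three times, and a typo in a config value degrades to the default instead of to NaN.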

What Would I Do Differently?

Start With Encryption

Simpler Rate Limiting Right Away

Less Ambitious Database Schema

Compliance As A Feature NOT An Afterthought

Encryption from day one is a requirement, not a nice-to-have. Not because I knew I would need it, but because trying to encrypt live data in production is (1) scary and (2) potentially costly, in both user data and time. The cost of adding it retroactively is stress and days of meticulous testing, backing up and restoring data, and more testing.

I would start with three rate limiters MAX: auth (strict), API (moderate), and uploads (file-size aware). The 15+ limiters of spaghetti code I ended up with were a result of over-engineering, and ultimately most of those edge cases never happened.
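To make "three limiters" concrete, here is a minimal sketch of what that setup could look like. Everything in it is an assumption for illustration: the window sizes, the request caps, and the `FixedWindowLimiter` class are hypothetical, it counts in memory inside a single process, and the file-size check for uploads is left out.

```typescript
// Hypothetical configuration: names and numbers are illustrative, not Favory's.
type LimiterName = 'auth' | 'api' | 'uploads';

interface LimiterConfig {
  windowMs: number; // length of the counting window in milliseconds
  max: number;      // requests allowed per key within one window
}

interface WindowState {
  count: number;
  resetAt: number; // timestamp (ms) when the current window ends
}

const LIMITS: Record<LimiterName, LimiterConfig> = {
  auth:    { windowMs: 15 * 60 * 1000, max: 10 },  // strict
  api:     { windowMs: 15 * 60 * 1000, max: 300 }, // moderate
  uploads: { windowMs: 60 * 60 * 1000, max: 20 },  // pair with a file-size cap
};

// Minimal in-memory fixed-window counter. It only works within one process;
// a multi-instance deployment would need a shared store.
class FixedWindowLimiter {
  private readonly config: LimiterConfig;
  private readonly hits = new Map<string, WindowState>();

  constructor(config: LimiterConfig) {
    this.config = config;
  }

  // Returns true when the request is allowed and false when it should be rejected.
  allow(key: string, now: number = Date.now()): boolean {
    const state = this.hits.get(key);

    // First request for this key, or the previous window has ended: start fresh.
    if (!state || now >= state.resetAt) {
      this.hits.set(key, { count: 1, resetAt: now + this.config.windowMs });
      return true;
    }

    if (state.count >= this.config.max) {
      return false;
    }

    state.count += 1;
    return true;
  }
}

const limiters: Record<LimiterName, FixedWindowLimiter> = {
  auth: new FixedWindowLimiter(LIMITS.auth),
  api: new FixedWindowLimiter(LIMITS.api),
  uploads: new FixedWindowLimiter(LIMITS.uploads),
};

// Usage: key by IP address or user ID, depending on the route.
console.log(limiters.auth.allow('203.0.113.7'));
```

Three named entries in one object are easy to audit in a single glance, which is the whole point of capping the count.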

116 tables. 116 tables! "Dear past Tyler, You don't need a table for SmartHomeConnection, MealPlanningConnection, GeofenceReminder. At least not to start." I had tables for features that were merely dreams, wisps of text on a napkin, nothing that had substance. Keep a clean database and only add tables when you need them.

GDPR and COPPA are not jokes. They are not something to say "I will get to that later... I need to add a red blinking light for no reason" about. If you are launching in a region that has laws, read them first and comply with them before writing code. Ensure you know what you are doing. This is not a "do first, ask permission later" situation. I was lucky that I realized this just before I hit publish, but compliance is a feature, not a nice-to-have. Retrofitting it meant touching nearly every route in the application.

What Solo Development Taught Me

Developing by yourself is sometimes what happens when you have an idea and need an MVP before you can get other devs on board. The database decisions I made in week one affected the API response times I was trying to fix in week five. When you own the whole stack, you have to stop thinking in silos. That is the most important skill I bring to client work now.

47 Days Later

So here we are. A shipped product. A tired developer. And an app that lives in the real world. The scariest part of programming, art, and life is handing over something you made and saying, "yeah I made this... just don't break it or hate it." Here is the reality: people will break it, people will find edge cases, and some people might not like it or might prefer other options. One important lesson I learned in my "Human and Computer Interaction" class is this:

If a user cannot understand how to use a feature or gets confused by something, it is easy for a CS major or someone who knows computers to say, "well, the user is just dumb." That is not true. If there is a bug, a confusing feature, or something that prevents the user from doing what they want, it is the programmer who didn't program it right.

The Deliverable

Despite everything, though, I made something and I put it out into the world. Favory is live. Not a prototype. Not a "beta" with a half-thought-through codebase. A fully functioning wishlist app with:

AI powered gift ideas that actually make sense

Universal imports

A group and Secret Santa system that allows for meaningful gift giving

User accounts, friend connections, and shared wishlists

Privacy by default

Real users signed up, created lists, coordinated gifts, and ran Secret Santa exchanges without texting me that something broke... well, at least the number of texts has died down.

User Feedback

Amazing App

this app makes me feel organized and helps me keep my lists orderly for important things.

Five Stars

eson14

Very User Friendly

Super convenient and easy to use

RJO

What I Learned About Shipping Fast

Honestly? 47 days was kind of silly. But it taught me more than any tutorial or course. Here is what stuck:

Constraints force clarity. When you don't have time to build everything, you have to decide what you do have time for. You have to figure out what matters most to users and move forward. Features that work > a limitless void of ideas.

"Good enough" is a feature. Perfectionism kills projects after burnout sets in. Knowing when something is "good enough" and ready to ship is more important than polishing it for your own comfort. And honestly, polishing it over and over again tends to introduce new bugs, which is the opposite of what you want.

You learn the full stack by owning the full stack. On some projects, especially at big companies, you won't own the full stack. You might work on only one part of it, but that doesn't mean you can't take ownership of the whole thing. Learn about what you are coding and how it interacts with other parts of the stack. Make it your problem when something breaks, even if it isn't in the part of the stack you work on.

Shipping is the real test. Code running on localhost is as good as a pizza that is sitting in a pizza shop. It never achieves its full potential or intended purpose. It is when you put your product in the hands of real users that you know you have built something solid.

What This Means for Client Work

Okay, I have been selfish this whole article. I am not just writing this story to brag about a side project (even though I have).

This is the experience and care I bring to every freelance engagement. When I take on a client's project, they get someone who has:

Imagined something they wanted in the world

Architected and shipped a complete product under constraints

Made difficult decisions to ensure the end user got what they expected (not just what I wanted)

Debugged production issues at 2 AM because there was no one else to call

Learned what it means to meet deadlines that are not negotiable

I become invested in every project I work on. It may not be my brainchild, but it is something that I pour myself into.

Whether it's a web app, a mobile product, or a custom tool, I have done this before. I can do it again. I can do it for you.

Your Turn

That's it. That's the Favory story. 47 days from empty repo to deployed product. Good decisions, questionable 2 AM debugging, and everything in between.

If you made it this far, you're probably one of two people:

A Developer: Someone who wanted to see how someone else approaches a problem of this size and scope. Hopefully you found something useful here. Maybe a technique to try, a mistake to avoid, or just solace that your own late-night debugging sessions aren't just a hallucination.

Someone with a project and a dream: You want something built that will make the world a better place. Maybe you have been burned by large agencies that overpromise and underdeliver. Maybe you are tired of cookie-cutter solutions that don't quite solve the problem you have in mind. Maybe you just want to work with someone who has built something.

Either way, I'd love to hear from you!

Get in Touch

Have a project in mind? I build custom web apps, mobile apps, and software tools that solve the problems that you face. No templates. No page builders. Just clean code that does exactly what you need.

Have questions? Send me a message and I'll respond within 24-48 hours.

More Posts Coming

This is the first deep-dive on this blog, but it won't be the last. If you want to see how I approach other builds or hear my thoughts on:

How I built this site

Custom Code vs. Page Builders: When Each Makes Sense

From Company Engineer to Freelance Developer: What I Have Learned (so far)

Stick around!

Thank you for reading,

Tyler Kurt Gordon
